Introduction

With the rapid growth in network traffic, network operations teams need a way to keep their infrastructure agile and robust. Long gone are the days of network changes taking days or weeks to complete. The best way to meet these ever-changing business requirements is to pair a network operating system (NOS) that has automation as one of its foundational principles with a robust network automation framework. In this blog, I will walk you through setting up an entire network automation pipeline that uses Ansible and GitLab to provision an ArcOS EVPN VxLAN fabric.

Automation Framework Attributes

Before we jump into the set of tools needed to make operator workflows more dynamic, it is good to define a core set of attributes that any useful automation framework should have:

- Simple – must be usable and easy to learn for all members of the network team

- Scalable – must be able to support an entire network domain

- Repeatable – each process should be streamlined for operators and produce consistent results

- Efficient – should significantly reduce the errors seen when humans configure devices via legacy CLI practices

DevOps to NetDevOps

The good news is that the goals above are not unique to building scalable, robust network architectures. Similar goals were top of mind when the DevOps methodology and culture started to take hold in IT organizations. The culture shift that DevOps requires (increased communication among teams, the ability to iterate quickly, automated testing, and replicable processes, to name a few) rests on the same principles that network operators need to embrace to migrate successfully to this new automated paradigm. To pair with that culture change, NetDevOps also employs the following concepts:

- Infrastructure as Code (IaC) — This is the process of managing and provisioning all types of infrastructure through definition files stored in a central location – a single source of truth. Traditionally, network changes had to be done manually and individually. Abstracting configurations into a machine-readable form, or “code”, allows the operator to reuse and repurpose configurations allowing for more efficient usage of network resources.

- Testing — Leveraging VMs and containers, operators can build topologies that mirror production networks, then use these topologies for testing and verification of the proposed configuration changes.

- Automation Toolsets — There has been considerable investment from all the open-source DevOps toolsets (Ansible, Puppet, Chef, Salt) to support network infrastructure changes. Network operators can benefit from using these hardened tools to handle all the underlying connection requirements, allowing for more focus on transforming their configurations into re-usable data models.

ArcOS Automation Attributes

The ArcOS architecture allows for an easy transition from the traditional CLI-based configuration approach to an automated workflow. Its OpenConfig-based data model exposes a consistent API across all northbound interfaces, giving operators flexibility in their deployment workflows. ArcOS supports full config parity across all programmatic interfaces, including NETCONF/RESTCONF, Python-based APIs, and open-source NetDevOps toolsets.

Automating ArcOS with Ansible

While there are many toolsets to choose from in the NetDevOps world, Ansible is one of the most popular for network configuration management. Ansible’s popularity is due to a few fundamental design choices, including but not limited to:

- The ability to leverage a secure transport and authentication scheme most likely already enabled within operations (e.g., SSH)

- An agent-less approach

- Minimal dependencies required on the managed entity (e.g., Python)

- The ability to declaratively express “plays” and “tasks” in an abstract yet simple way without vast programming knowledge

Having deployed many of the DevOps tools in production environments, I have found that Ansible is the easiest to operationalize. Ansible is also easier to phase in to an existing environment, allowing for quick automation wins.

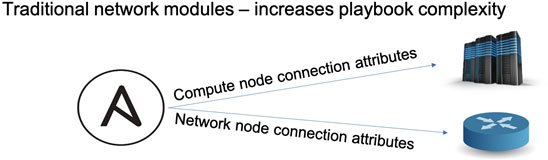

The ArcOS Ansible integration leverages the Debian/ONL kernel that is deployed with ArcOS, allowing ArcOS devices to be provisioned like a compute node. With most other network operating systems, as shown in the figure, an operator would have to manage two or more different sets of connections (one for the compute infrastructure and another for the network devices), which results in overly complex playbooks.

Fig. Traditional NOS Ansible Modules vs ArcOS Ansible Modules

Now compare that with the ArcOS Ansible modules shown on the right, which leverage the default Ansible connection attributes. This ‘first-class citizen’ approach lets the operator drop ArcOS modules into existing compute playbooks and extend tasks to the network infrastructure efficiently and seamlessly.

There are two main ArcOS modules:

- arcos_config: Provides the same configuration environment found when using the CLI, including commit, rollback, validate, and config diff

- arcos_command: Used to gather operational state from the device; returns structured data to Ansible

Implementing a NetDevOps framework with ArcOS

In this section, I will walk you through deploying a full NetDevOps pipeline to configure a full ArcOS topology, using open-source toolsets while adhering to the goals stated earlier.

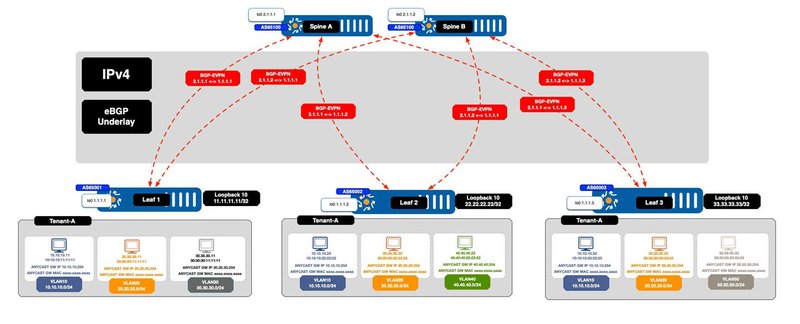

Fig. Sample Topology

Pushing ArcOS configuration changes with Ansible

In this example, I will be pushing all the needed configuration for 3 leaf nodes to be active in this EVPN VxLAN topology. The source of truth will be git (specifically GitLab in this case) and all configurations will be generated from a simple set of YAML files and pushed out to the devices via Ansible.

Let’s first examine how the configuration playbook is laid out:

CODE BLOCK HERE

The configuration playbook relies on Ansible roles to make the playbook flexible. Using roles, aside from being an Ansible recommendation, makes it easy to include or exclude specific configuration aspects depending on the workflow. Each of the roles shown above has a very similar architecture, so we can use one as an example. I am picking the arcos-l2evpn role, and here is its task list:

CODE BLOCK HERE

There are essentially three key steps here (all executed locally on the ArcOS node):

- Generate a candidate configuration from the XML template

- Apply the candidate config (don’t commit if in check mode)

- (Optionally) If in check mode, return the configuration diff instead of committing the config.
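The control flow of these steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `FakeDevice` and `push` are hypothetical names, the "configuration" is a plain list of lines, and none of this is the actual arcos_config implementation.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDevice:
    """Hypothetical stand-in for a device's configuration session."""
    running: list = field(default_factory=list)
    candidate: list = field(default_factory=list)

    def load(self, lines):
        # Stage the candidate without touching the running config.
        self.candidate = list(lines)

    def diff(self):
        # Lines present in the candidate but not in the running config.
        return [line for line in self.candidate if line not in self.running]

    def commit(self):
        self.running = list(self.candidate)

    def discard(self):
        self.candidate = list(self.running)


def push(device, rendered_lines, check_mode):
    """Apply a rendered candidate; commit only when not in check mode."""
    device.load(rendered_lines)      # apply the candidate config
    pending = device.diff()
    if check_mode:
        device.discard()             # report the diff, never commit
    else:
        device.commit()
    return {"changed": bool(pending), "diff": pending}
```

Calling `push(device, lines, check_mode=True)` leaves `device.running` untouched and returns only the pending diff, which is the behavior the confirm stage later relies on.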

It is important to note that the role generates an XML-encoded configuration to be loaded into the ArcOS configuration daemon. XML-encoded files are more efficient for the configuration daemon to process and allow for cleaner updates to the running configuration. We can abstract this detail away from the network operator by providing a template interface for the configuration. In this case, the arcos-l2evpn role renders the vlans list from the leaf group_var file, which is an easy-to-read YAML file, into the correct XML encoding.

CODE BLOCK HERE
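To make the YAML-to-XML step concrete, here is a minimal Python sketch of the idea. It is not the role's template: the standard library's `xml.etree.ElementTree` stands in for the XML template, the `vlans` list stands in for the parsed group_var data, and the keys (`id`, `name`) and element names (`vlans`, `vlan`, `vlan-id`) are assumptions chosen for illustration rather than the ArcOS schema.

```python
import xml.etree.ElementTree as ET

# Stand-in for the parsed `vlans` list from the leaf group_var file.
# The keys used here are hypothetical.
vlans = [
    {"id": 100, "name": "tenant-a-web"},
    {"id": 200, "name": "tenant-a-db"},
]


def render_vlans(vlans):
    """Render a list of VLAN dicts into an XML string (illustrative schema)."""
    root = ET.Element("vlans")
    for vlan in vlans:
        entry = ET.SubElement(root, "vlan")
        ET.SubElement(entry, "vlan-id").text = str(vlan["id"])
        ET.SubElement(entry, "name").text = vlan["name"]
    return ET.tostring(root, encoding="unicode")


print(render_vlans(vlans))
```

The operator only ever edits the list at the top; the rendering function, like the template in the role, stays the same for every push.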

Using this approach, the same XML template is used for each configuration push, ensuring predictable results. Each role shown in the main playbook follows this same structure; the only difference is which variable file it uses to render a candidate config. For example, the arcos-bgp role uses a BGP array defined in host_vars, since each node has unique values for the BGP config:

CODE BLOCK HERE
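The split between group-level and host-level variables can be pictured as a simple merge in which host-specific keys win over group keys at the top level, which is how Ansible resolves inventory variables by default. The sketch below is a simplification of that behavior, and every variable name and value in it is made up for illustration.

```python
def effective_vars(group_vars, host_vars):
    """Merge variable sources so host-level keys win over group-level keys."""
    merged = dict(group_vars)
    merged.update(host_vars)
    return merged


# Hypothetical data: shared values live with the group, unique values with the host.
leaf_group_vars = {"vlans": [{"id": 100, "name": "tenant-a-web"}], "mtu": 9000}
leaf1_host_vars = {"bgp": {"asn": 65001, "router_id": "10.0.0.1"}}

leaf1 = effective_vars(leaf_group_vars, leaf1_host_vars)
```

Here `leaf1` ends up with the shared `vlans` list from the group and its own `bgp` block, so each role can render from whichever level holds its data.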

The YAML data models shown here are just a suggestion. They could easily be modified to fit a different source of truth or templating structure.

Ansible’s built-in templating and well-defined host attribute structure make it easier to write automation that is consistent, repeatable, and far less error-prone. This allows the operations team to complete those dreaded weekend change windows and still have time to enjoy their weekend.

Building the Complete NetDevOps pipeline

With the source playbooks complete, the next step is to build out the NetDevOps CI/CD pipeline:

Fig. NetDevOps CI/CD Pipeline

This pipeline is executed using GitLab’s CI/CD environment, which provides a single tool for both the source code/configuration repository and the CI/CD pipeline executor. While alternative tools exist, converging on GitLab allows us to limit the number of tools involved, thereby simplifying the overall design.

Let’s examine the CI/CD pipeline stages:

CODE BLOCK HERE

As we traverse this pipeline through its four stages, a stage doesn’t run unless the prior stage is successful. The test and confirm stages are executed each time a commit is pushed to any branch, whereas the deploy and validate stages only run on a commit or merge into the master branch. This allows us to run tests on many branches while deploying changes in a more controlled fashion from a single protected branch.
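The gating rules just described can be modeled in a few lines of Python. This is only a sketch of the logic, not GitLab's scheduler or its configuration syntax; `stages_for` and `run_pipeline` are hypothetical helper names, and each job is represented by a callable that returns True on success.

```python
STAGES = ["test", "confirm", "deploy", "validate"]
PROTECTED_ONLY = {"deploy", "validate"}


def stages_for(branch, protected_branch="master"):
    """Return the stages that apply to a commit on the given branch."""
    return [
        stage for stage in STAGES
        if stage not in PROTECTED_ONLY or branch == protected_branch
    ]


def run_pipeline(branch, jobs):
    """Run the applicable stages in order, stopping at the first failure."""
    results = {}
    for stage in stages_for(branch):
        ok = jobs[stage]()
        results[stage] = ok
        if not ok:
            break
    return results
```

For a feature branch, `stages_for` returns only test and confirm; for master it returns all four, and `run_pipeline` never reaches deploy if confirm fails.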

The test stage will consist of 3 steps:

CODE BLOCK HERE

- Spin up a virtual topology that mimics the production setup. In this case, I am using Vagrant to manage the virtual topologies.

- Run the Ansible configuration playbook. This is the same configuration that will eventually be pushed to production in a later stage.

- Run a set of validation steps on the virtual topology

A quick note on step 3: using Ansible for both the configuration push and the validation limits the number of toolsets in the pipeline, in keeping with the goal of keeping things simple.

CODE BLOCK HERE

In the example shown above, we:

- Validate cabling is correct by comparing LLDP neighbor output to a known good wire-map

- Validate BGP is up and has the correct number of neighbors

- Validate that overlay routes exist in the tenant VRF
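The logic behind these three checks is simple enough to sketch in Python. The data shapes below (a port-to-neighbor mapping, a list of neighbor dicts with a `state` key, a collection of prefixes) are assumptions made for illustration; in the pipeline itself the checks are Ansible tasks operating on the structured output gathered from the devices.

```python
def check_wiring(wire_map, lldp_neighbors):
    """Compare observed LLDP neighbors against a known-good wire-map."""
    problems = []
    for port, expected in wire_map.items():
        seen = lldp_neighbors.get(port)
        if seen != expected:
            problems.append(f"{port}: expected {expected}, saw {seen}")
    return problems


def check_bgp(neighbors, expected_count, up_state="ESTABLISHED"):
    """True when exactly the expected number of BGP sessions are up."""
    up = [n for n in neighbors if n["state"] == up_state]
    return len(up) == expected_count


def missing_routes(vrf_routes, required_prefixes):
    """Return the required overlay prefixes absent from the tenant VRF."""
    return sorted(set(required_prefixes) - set(vrf_routes))
```

A validation run passes when `check_wiring` returns an empty list, `check_bgp` returns True, and `missing_routes` returns an empty list; anything else should fail the stage.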

After the virtual topology has been configured and the validation playbook above has successfully executed each task, the CI/CD pipeline calls the confirm stage. The confirm stage generates a human-readable diff of the configuration that will be applied once all the templates have been rendered.

Here is the pipeline configuration for this step:

CODE BLOCK HERE

The key part of this step is calling the Ansible playbook with the --check and --diff flags. The ArcOS Ansible modules conform to Ansible check_mode by applying the candidate configuration to the system but not committing it. Instead, the output of ‘show configuration diff’ is returned to the playbook. We also use GitLab’s artifacts feature here to store the config diff for each host. This gives the network operations team a convenient way to review the proposed config before it is pushed into production in the next stage of the pipeline. If you are trying to roll out a network change on a Friday evening, you will appreciate the benefits of this. For example, the config diff for the arcos-evpn-global role for leaf1 in this case looks like:

CODE BLOCK HERE
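As a rough picture of what the confirm stage produces, the following Python sketch builds a per-host diff and writes one file per host into a directory that could then be saved as a pipeline artifact. It is an analogy only: `difflib` stands in for the device's own ‘show configuration diff’ output, the unified-diff format is not the ArcOS format, and the function names and the `<host>.diff` naming scheme are assumptions.

```python
import difflib
from pathlib import Path


def config_diff(running, candidate, host):
    """Return a unified diff between two configuration texts."""
    return "".join(difflib.unified_diff(
        running.splitlines(keepends=True),
        candidate.splitlines(keepends=True),
        fromfile=f"{host}/running",
        tofile=f"{host}/candidate",
    ))


def write_artifacts(diffs_by_host, out_dir):
    """Write one <host>.diff file per host and return the paths written."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for host, text in sorted(diffs_by_host.items()):
        path = out / f"{host}.diff"
        path.write_text(text)
        paths.append(path)
    return paths
```

Keeping one file per host means a reviewer can open just the devices they care about instead of scrolling through a single combined log.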

Once the confirm stage is complete and the candidate branch is merged into master, the third stage of the pipeline, deploy, starts:

CODE BLOCK HERE

This is the same Ansible playbook that was used in the test stage, just run against a different group of devices. One other nicety that GitLab provides is an environment variable that holds the commit hash of the given commit. That hash string can be passed into the arcos_config module’s comment parameter, allowing it to be referenced in the device’s commit list:

CODE BLOCK HERE
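For readers who want to see the idea outside of a playbook, here is a small Python sketch that builds such a comment string from the job environment. `CI_COMMIT_SHA` is one of GitLab's predefined CI/CD variables; the `commit_comment` helper, the comment wording, and the fallback text are assumptions made for illustration.

```python
import os


def commit_comment(env=None):
    """Build a commit comment that ties a device commit to a git commit."""
    env = os.environ if env is None else env
    sha = env.get("CI_COMMIT_SHA")
    if not sha:
        # Outside of CI there is no commit hash to reference.
        return "manual run (no CI commit hash available)"
    return f"gitlab commit {sha}"


print(commit_comment())
```

With the hash recorded on the device, an operator looking at the commit list can trace any change back to the exact merge that produced it.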

The final stage, validate, is the same Ansible validation playbook that was run against the virtual topology, this time executed against the production devices:

CODE BLOCK HERE

Summary

By using just GitLab and Ansible, we were able to apply the NetDevOps concepts discussed earlier to build a network automation pipeline with ArcOS. By leveraging these open-source tools, the network operations team can focus on delivering a streamlined set of configurations that are stored as inputs to a consistent, repeatable configuration process. This ultimately allows existing network infrastructure to change as rapidly as the business requirements demand.

Learn More

Check out the following demo video showing this pipeline in action:

Contact us to learn more about how we can help start your automation journey.